Data Science in English - Part I

A straightforward introduction to what computers can do for you

Author’s note: our goal here is to provide a generally understandable overview of the Data Science landscape largely from first principles and leading all the way to some of the latest breakthroughs in “AI” such as ChatGPT. While the concepts can get complex, this should be approachable for almost anyone who is interested in learning a bit more about Data Science. But if you have any questions, let me know in the comments below!

With much of the recent hype around AI or “artificial intelligence” kickstarted by ChatGPT, it’s important to understand the core mechanics of how such systems are built.

But before one dives into the specifics of a Large Language Model (LLM) like ChatGPT, it’s important to step back a bit.

This article is the first in a series designed to give you an intuitive understanding of what Data Science, Machine Learning, and AI are, such that you could have a useful conversation with someone about them. Implementation details will be light to non-existent here as plenty of great tutorials exist elsewhere. Rather, we are shooting for a mental framework such that you can understand “AI” broadly, have a sense of what it can do, and know what its fundamental limitations and risks are.

The Start: Data Science

You’ll hear three broad terms to describe this space: Data Science, Machine Learning, and Artificial Intelligence (AI). Depending on who you talk to, some people will use these terms interchangeably while others would bristle at treating them as equivalent.

For our purposes, we’ll start with Data Science, which we will define broadly as the act of deriving non-obvious insights from data. Under this definition, Machine Learning and AI are components of the field.

The phrase “non-obvious insights” differentiates Data Science from things like “Data Analytics” and “Business Intelligence.” While absolutely useful in their own right, most analytics and business intelligence have historically relied on showing sums or other aggregations and filters of data sets. Such calculations are what we’ll call obvious insights, while noting that there is still a lot of effort and skill in surfacing the right obvious insights in the right way (which is what good analysts are quite good at).

With that understanding of obvious insights, let’s now look at the domain of Data Scientists: non-obvious insights. Here are some examples:

Which of my borrowers are most likely to default on their loans?

What is the best product to recommend to someone who is about to buy three pounds of peanut butter?

How much revenue should I expect to generate next month?

What is the optimal price to charge for a cup of coffee?

All of these questions (and many more) are business applications of Data Science. Their answers are non-obvious. Even if you could manually read each row of data, or even if you built 80 different reports that aggregate the data, you are not going to answer any of these in a satisfactory way.

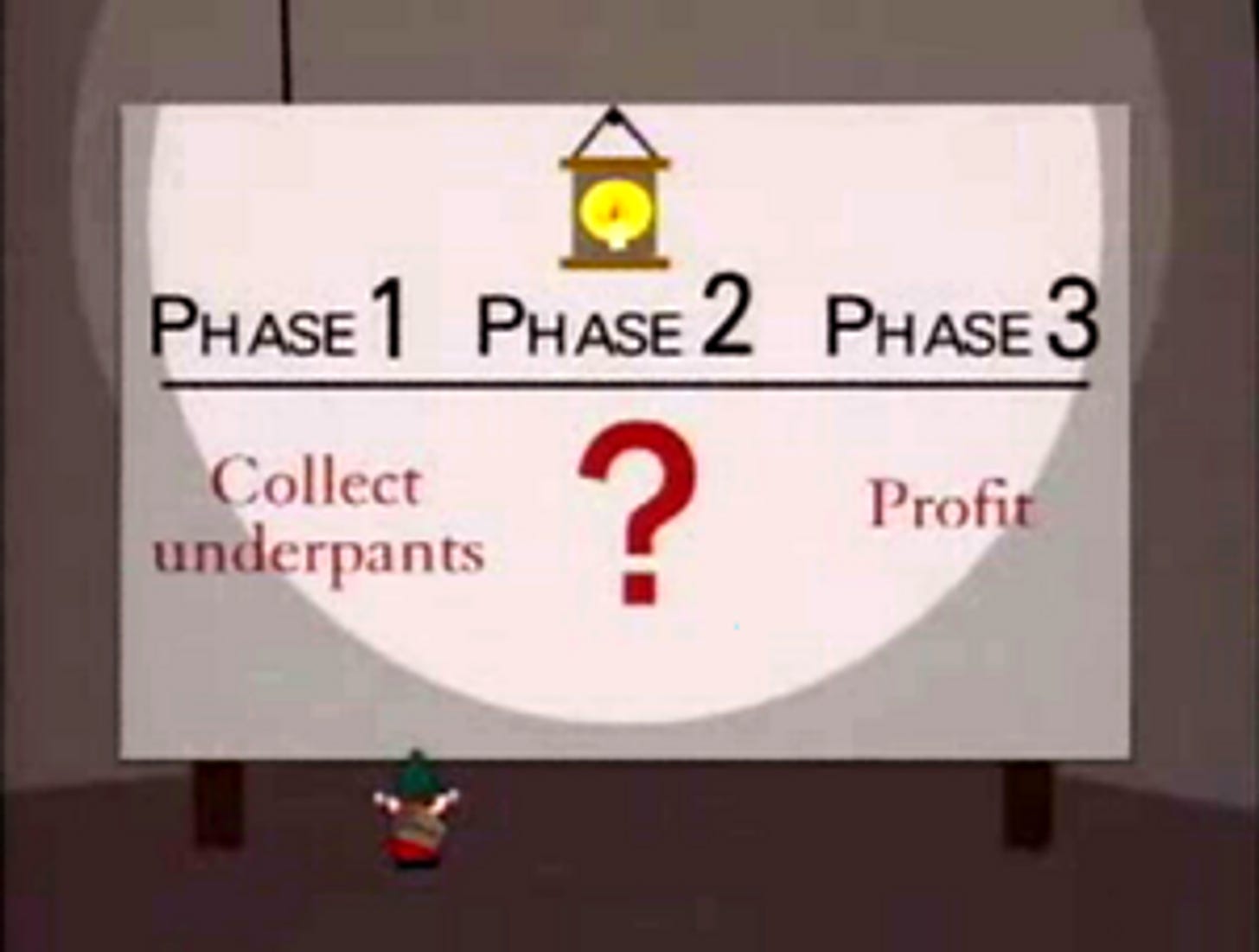

Knowing that, you may be tempted to say “great, let’s just let AI solve this!” This is not the correct response. Data Science and AI systems are not magic boxes that solve problems. Don’t be like the gnomes from South Park:

Any method in Data Science has benefits, drawbacks, and many decision points that can radically influence the conclusion. It’s critically important to understand these tradeoffs and decision points so that you can ensure that the system you create reflects reality and the will of those managing it. So let’s dig in with a basic example:

Basic Machine Learning: An Example

We’ll stick with the first example above. Let’s suppose we are a bank looking to model how likely our borrowers are to default on their loans. Such a model, once built, could then be used to evaluate new loan applications and determine what rate to offer each borrower.

Let’s start with a general overview of how we’d approach this:

Collect historical data on our loans (as much as possible). Each row would be a loan and each column would be a characteristic (feature) of that loan.

Lastly, because this is historical data, we would have a final column representing the outcome: 1 if the borrower defaulted on the loan, 0 if not.

We are going to clean up our data and set aside a portion, say 20% of our loans, which we’ll use later to validate our model. Call this holdout data.

Now, on the remaining 80%, we will use a machine learning algorithm which will try to predict the outcomes as accurately as possible based on the features. This is called training. The algorithm you use here could range from a simple linear (or in this case, logistic) regression algorithm to more advanced algorithms.

Once we have a model that transforms the features into a 0 to 1 probability of default, we can now go and do a couple things:

Validate that model on the holdout data to see if it is about as predictive there as it was on the data you trained it on.

Try different machine learning algorithms to see if we can get better accuracy with a different approach.

Once satisfied, set up this model to evaluate the default risk of new loan applications. This would give you the probability that a new loan would default based on historical trends, and you could then set an interest rate commensurate with that risk.
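For readers who like to see things run, here is a minimal sketch of that workflow in plain Python. Everything in it is an assumption made for illustration: the loans are simulated, the two features (debt_to_income and missed_payments) are hypothetical, and the hand-rolled logistic regression stands in for whatever library a real team would use.

```python
import math
import random


def make_loans(n, rng):
    """Simulate n historical loans as (features, outcome) pairs.

    The features and the formula linking them to default are made up
    purely so the example has something to learn from.
    """
    loans = []
    for _ in range(n):
        debt_to_income = rng.uniform(0.0, 1.0)   # hypothetical feature
        missed_payments = rng.randint(0, 5)      # hypothetical feature
        z = 4 * debt_to_income + 0.8 * missed_payments - 4
        p_default = 1 / (1 + math.exp(-z))
        outcome = 1 if rng.random() < p_default else 0  # 1 = defaulted
        loans.append(([debt_to_income, missed_payments], outcome))
    return loans


def predict(weights, bias, features):
    """Turn a loan's features into a 0-to-1 probability of default."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1 / (1 + math.exp(-z))


def train(rows, epochs=100, learning_rate=0.01):
    """Fit a logistic regression by nudging weights to shrink the error."""
    weights, bias = [0.0] * len(rows[0][0]), 0.0
    for _ in range(epochs):
        for features, outcome in rows:
            error = predict(weights, bias, features) - outcome
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


def accuracy(weights, bias, rows):
    """Share of loans where the predicted class matches the outcome."""
    hits = sum((predict(weights, bias, f) >= 0.5) == (y == 1)
               for f, y in rows)
    return hits / len(rows)


rng = random.Random(0)
loans = make_loans(1000, rng)

# Set aside 20% of the loans as holdout data; train on the other 80%.
split = int(0.8 * len(loans))
train_rows, holdout_rows = loans[:split], loans[split:]
weights, bias = train(train_rows)

# Compare accuracy on loans the model has seen vs. loans it has not.
train_acc = accuracy(weights, bias, train_rows)
holdout_acc = accuracy(weights, bias, holdout_rows)
print(f"train accuracy:   {train_acc:.2f}")
print(f"holdout accuracy: {holdout_acc:.2f}")

# Score a new (hypothetical) application.
new_loan_risk = predict(weights, bias, [0.9, 4])
print(f"estimated default risk: {new_loan_risk:.2f}")
```

If the two accuracy numbers come out close to each other, that is the validation step doing its job. The last number is what a bank might feed into its pricing.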

Still with me? Good! Each of these steps can get complex, but in concept it’s simple: we have the computer try to minimize the gap (called the error) between its predictions and the observed reality.
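To make “the gap” a bit more concrete, here is a small sketch of one common way to score it for a yes/no problem, often called log loss. The outcomes and predictions are made-up numbers, and log loss is only one of several error measures a Data Scientist might pick.

```python
import math


def log_loss(outcomes, predictions):
    """Average penalty for predicted probabilities given 0/1 outcomes.

    Assumes every prediction is strictly between 0 and 1.
    """
    total = 0.0
    for y, p in zip(outcomes, predictions):
        total += -math.log(p) if y == 1 else -math.log(1 - p)
    return total / len(outcomes)


outcomes = [1, 0, 0, 1]                  # what actually happened
confident_right = [0.9, 0.1, 0.2, 0.8]   # close to reality
hedging = [0.5, 0.5, 0.5, 0.5]           # always says "coin flip"
confident_wrong = [0.1, 0.9, 0.8, 0.2]   # far from reality

for name, preds in [("confident and right", confident_right),
                    ("hedging", hedging),
                    ("confident and wrong", confident_wrong)]:
    print(f"{name}: {log_loss(outcomes, preds):.3f}")
```

The smaller the number, the closer the predictions are to what happened. Training is the computer searching for the model that drives this number down.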

The problem we just described is considered a supervised learning problem, which means that we are using an observed reality as the “supervision” and training the model to fit that reality (we’ll get to unsupervised learning later). More specifically, it is a binary classification problem because we are trying to classify each data point (each loan) into being defaulted or not.

Things to Consider

As we mentioned above, machine learning algorithms are not magic boxes that solve your problems. Like everything computers do, they are limited to and influenced by only what the user inputs. Specifically in our case, there are several things to watch out for:

Historical bias - as mentioned above: our model is only reflective of the historical data it was trained on. That may work now, but will it work ten years from now, or will the factors that predict default change by then? In practice, most models are retrained at some cadence to prevent model drift: the slow decay of accuracy seen over time as today’s reality diverges from the past. But that leads us to the second risk…

Overfit - fitting a model too narrowly could lead us to models that are far too specific. If you just trained on data from March of 2020, you could be swayed by artifacts from Covid that are no longer relevant. Such a model would be awful at predicting things now. This isn’t the only way a model can become overfit. We can also use too many features in our model, which leads us to include features that aren’t actually predictive but just happen to correlate. One way we combat overfit is the holdout method described above: if your model predicts the 20% it was kept blind to about as well as it predicted the 80%, then you can have some confidence that the model isn’t overfitted.
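Here is a deliberately extreme sketch of what overfit looks like. The “model” below simply memorizes every training loan, and the data is pure noise, so there is nothing real to learn. The loans, the 0.05 tolerance, and the looks_overfit helper are all assumptions for illustration rather than a standard recipe.

```python
import random

rng = random.Random(1)

# Hypothetical loans whose features are pure noise: nothing here can
# genuinely predict the outcome (1 = defaulted, 0 = did not).
loans = [((rng.random(), rng.random()), rng.randint(0, 1))
         for _ in range(1000)]
train_rows, holdout_rows = loans[:800], loans[800:]

# An extreme "too specific" model: memorize every training loan exactly.
memory = {features: outcome for features, outcome in train_rows}


def predict(features):
    """Look up the loan; unseen loans fall back to 0 (an arbitrary choice)."""
    return memory.get(features, 0)


def accuracy(rows):
    """Share of loans where the prediction matches the outcome."""
    return sum(predict(f) == y for f, y in rows) / len(rows)


def looks_overfit(train_acc, holdout_acc, tolerance=0.05):
    """Flag a model that does much better on data it has already seen."""
    return train_acc - holdout_acc > tolerance


train_acc = accuracy(train_rows)
holdout_acc = accuracy(holdout_rows)
print(f"train accuracy:   {train_acc:.2f}")
print(f"holdout accuracy: {holdout_acc:.2f}")
print("looks overfit:", looks_overfit(train_acc, holdout_acc))
```

The memorizer is perfect on the 80% it has seen and roughly a coin flip on the 20% it has not. That gap is exactly what the holdout data is there to expose.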

“Garbage in Garbage Out” - based on the data that you put into the model, your model could be very predictive or not at all predictive of who defaults. If your only inputs were, say, the type of candy that each applicant liked, you’d still get a model that will dutifully output a chance of default. That model may even appear predictive due to some random correlation between candy preference and loan defaults. Of course, a candy-based model is absurd, but the broader point is this: the model is simply an optimized prediction based on the data you put in. If your features aren’t very predictive, or if the data has a lot of missing or inaccurate values, you are probably going to end up with a junk model.

Model Bias - because models are simply optimizing to reduce overall error, they can end up arriving at results that systemically over-predict or under-predict for certain subsets of the data. This gets hairy quickly in cases like the race of the applicant and the likelihood of loan default. Even if you don’t include race in the model, you may still end up with a model that systemically over-predicts the likelihood of default in certain populations. To address this, there are various techniques that fit under the Orwellian name of “model fairness,” but the concept is valid insofar as it ensures that there are no systemic errors in your model. Certainly in this case, a bank would be legally and ethically required to investigate this and ensure that its models are not systemically biased.
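One simple way to start looking for this kind of systemic error is to break the model’s mistakes out by group. The sketch below computes, for each group, how often borrowers who did not default were wrongly flagged as likely defaulters. The groups “A” and “B” and all the records are made up, and this is only one of many checks that fall under model fairness.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """Per group: share of non-defaulters the model flagged as defaults.

    Each record is (group, actual, predicted) with 1 = default.
    """
    flagged = defaultdict(int)
    non_defaulters = defaultdict(int)
    for group, actual, predicted in records:
        if actual == 0:
            non_defaulters[group] += 1
            if predicted == 1:
                flagged[group] += 1
    return {g: flagged[g] / n for g, n in non_defaulters.items()}


# Hypothetical model outputs for two groups of borrowers.
records = [
    ("A", 0, 0), ("A", 0, 0), ("A", 0, 1), ("A", 1, 1),
    ("B", 0, 1), ("B", 0, 1), ("B", 0, 0), ("B", 1, 1),
]

rates = false_positive_rate_by_group(records)
for group, rate in sorted(rates.items()):
    print(f"group {group}: {rate:.2f} of non-defaulters wrongly flagged")
```

In this toy data the model is wrong about good borrowers in group B twice as often as in group A, even though group membership was never an input to the prediction. A gap like that is the signal to investigate further.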

To address all of the above considerations, a Data Scientist building a model like this will be making tens to hundreds of decisions on the best way to handle each feature, which model type to use, how to validate the model, what timeframes to use, and how to weight accurate vs. inaccurate predictions.

And that is also really just the start. There are many additional edge cases to consider depending on the problem we’re trying to solve.

Taking a Step Back: Conclusion

Bringing it back to the high level: Data Science is the process of generating non-obvious insights from data. Machine Learning is a subset of Data Science and describes algorithms in which computers “learn” from the data to be able to predict or assess something (e.g. the likelihood of loan default). Specifically, our loan example is a case of supervised learning, where we know an objective historical reality and we are telling the computer to optimize its model to fit that reality as closely as possible.

That’s it, honestly. Like many things, there is a ton of detail under the surface of Data Science. But in keeping with my theme of explaining things simply, it is important to understand the broad high-level concepts before we dig deeper.

A Look Ahead

As noted, what we have covered thus far is just the surface (or the tip of the iceberg): using supervised learning on a binary classification problem. The methods described above are fairly old by tech standards: many of the machine learning algorithms you’d typically use for that were theorized in the 1970s, even if they only became practical and widespread as computing power increased.

But when we see the current hype around “AI,” we are getting into legitimately more advanced concepts that are more recent discoveries. To get to a point where we can understand ChatGPT effectively, we’ll have to advance to newer techniques like Deep Learning, unsupervised learning, the field of Natural Language Processing (NLP), and then finally the arrival of Large Language Models (LLMs) like ChatGPT.