The Five C's of AI Prompting

A framework for writing good prompts and getting better answers from AIs!

If you’ve used GPT4 at all, you’ve realized that how you talk to it matters.

Already, “prompt engineering” has emerged as a topic and a potential career (or at least a skill), though it is obviously still very nascent.

Recognizing that almost no one is an expert in prompting at this point, let me summarize a bit of what I’ve seen, heard, and used so far when talking to the AI (in this case, GPT4) through thousands of prompts. This summary, for ease of recall, shall be branded the five C’s of AI prompting.

General Guidance

Speaking with an AI is not dissimilar to speaking with a human. You should always remember a few things:

AIs do remember context from earlier parts of your conversation (there is a limit to this typically, but for most conversations with GPT4 that won’t be a concern).

AIs are oriented first and foremost to satisfy the ask of the user unless otherwise specified.

Because of these first two points, iteration with AI is incredibly powerful, so you don’t have to get things perfectly right the first try.

Also related, you have to exercise sound judgement. Not everything an AI says will be accurate or the “right” way to do things.

The Wrong Way: An Ambiguous Prompt

As noted above - don’t feel that you need to get things perfectly right on the first try. Several of my best prompts are ones that I’ve iterated on over time as I’ve seen things and thought of things later on in a conversation.

That said, most of the strongest prompts I’ve seen give the AI more information rather than less.

If you tell the AI “hey, I want to buy a car, what should I buy?” it is going to reply with a frankly unimpressive outline of things you may want to consider when buying a car. It’s not unhelpful, but your lack of clarity leads to an ambiguous response.

So with that said, let’s show the five C’s and how we can make our query better.

To spoil the reveal, here are the five C’s:

Character

Clarity of Objective

Context

Constraints

Critical Questioning

We’ll take these in order.

Character

You should begin your prompt by asking the AI to roleplay as a character. Why this works well is unclear, but it seems to get the AI “thinking” in certain terms. This may not be needed for quick questions, but for anything of substance, setting a character helps the AI orient to the type of advice that you are looking for. Here are a few examples that I’ve used:

“I’d like you to act as an expert medical advisor…”

“I’d like you to act as a Michelin star chef…”

“I’d like you to act as a nutritionist and home cooking expert…”

“I’d like you to act as a Venture Capitalist who is an expert at evaluating early stage companies…”

Another benefit is that you’ll get an AI speaking in the tone of, say, a Venture Capitalist. In doing so, you can interact with that persona and likely learn along the way about how they think, what terms they use, what questions they ask, etc.

Clarity of Objective

After setting a character, you need to provide the AI with a clear objective of what you want to get out of the conversation. This can (and should) be fairly broad, as the AI is good at interpreting the broad goal. It’s a fine balance, but if you are asking about a buying decision on enterprise software, you’d probably say “I want you to help me identify the software that provides the best value-to-risk ratio.” You may then want to clarify further how you or your company would view value and risk. The point, though, is that if you orient the AI to your north star, the conversation will stay oriented that way, since that context is retained throughout the conversation.

Here are some other examples that I’ve used:

“I’d like you to help me craft a healthy weekly meal plan to help us eat healthier and produce food efficiently…”

“I’d like you to assess the following ideas to evaluate their chances of success as a business…”

“I’d like you to provide me career advice with the broad goal of being personally fulfilled and being financially successful…”

And it’s worth noting here: if you just said “I want the best [X] for you/me/us,” you’d probably get to a similar point, perhaps because the AI would guide you toward that by asking what “best” means for you and how you evaluate it. That said, put in the thought upfront on what “best” means for you. More than anything else, alignment on your overall objectives is going to influence the outcomes.

Context

After the first two big ones are out of the way, it’s time to lay out some context around why you have the objective that you do. Again, in the case of purchasing enterprise software, it could be explaining what pain points you are encountering or what use cases you have in mind. If you are asking it to plan your meals for you, then you will need to explain what you try to cook each week, what you like, how you currently cook, etc.

As with a human, this context is used by the AI to shade the conversation. The AI considers all the context and adapts its perspective to your situation. With the enterprise software example, it’s going to give you very different answers if you tell it you are a mom & pop retailer who has a database somewhere vs. if you say you are a tech startup with 30 software engineers. And it should do this, because the value-to-risk or “best” answer is completely different in those cases.

Examples here would be so varied as to not be entirely helpful. Just describe your situation holistically. I typically use bullet points, but it probably works as a running chain of thoughts as well.

Constraints

Alongside context, it is important to note specifically any constraints that you have. Outlining these upfront is worth doing as it narrows down the problem space for the AI. Keeping with the enterprise software example, you could note your budget constraint, a time or team capacity constraint, or the fact that you do or don’t want to use any/all of the major cloud providers.

It’s okay if you don’t know all the constraints upfront: the conversation is iterative, and you can simply say after the first reply “oh, actually we don’t want to use GCP for this, can you recommend an alternative approach?” But to the extent that you can group these upfront, you’ll get to the point a lot quicker and save time and cost in the process.
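The first four C’s can be assembled fairly mechanically. Here is a minimal Python sketch, assuming a hypothetical `build_prompt` helper (it is not part of any library) and illustrative details for the enterprise software example. The specific context and constraint bullets are placeholders I made up to show the shape, not recommendations.

```python
def build_prompt(character, objective, context, constraints):
    """Assemble a prompt from Character, Objective, Context, and Constraints.

    Hypothetical helper for illustration only.
    """
    lines = [
        f"I'd like you to act as {character}.",
        f"I'd like you to help me {objective}.",
        "",
        "Context:",
    ]
    # Context and constraints are written as bullet points.
    lines += [f"- {item}" for item in context]
    lines += ["", "Constraints:"]
    lines += [f"- {item}" for item in constraints]
    return "\n".join(lines)


prompt = build_prompt(
    character="an expert advisor on enterprise software purchasing",
    objective="identify the software that provides the best value-to-risk ratio",
    context=[
        "We are a tech startup with 30 software engineers.",
        "Our main pain point is (describe yours here).",
    ],
    constraints=[
        "Our budget is limited (state your actual figure here).",
        "We don't want to use GCP for this.",
    ],
)
print(prompt)
```

Keeping each C as its own argument makes it easy to change one piece (say, a new constraint) and re-run the same prompt.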

Critical Questioning

Lastly, we have critical questioning. And I think this is the most important to practice. After you get back an initial result from a prompt, you are honestly not going to get all that far with simply accepting the response as the word of a higher power and rolling with it.

Maybe you’ll get lucky, but just as if you were questioning a human subject-matter expert, you can and should challenge the AI’s assertion, ask about any caveats or potential alternatives, and more.

This all may seem self-evident to people who already practice critical questioning of authority in their day-to-day lives, but for others it is a bit less natural. No matter the case, it’s still a great area to apply some thought, both broadly here and then when engaging with the AI on any particular topic.

While this may have its own guide at some point, here are a few questions that I’ve found to be quite helpful in getting to better answers with the AI:

(Include in the original prompt) “Please feel free to ask me any questions that may help you answer this question better.”

“What are the pros and cons of going with Option 2 as opposed to Option 1?”

“This approach seems a little messy, can you think of a simpler way that we could achieve the same goal?”

“Let’s explore Option 1 - can you build a comprehensive outline for me of what that might look like?”

“Is there anything else we might need to consider that I haven’t asked about yet?”

These are just a sampling, but you get the idea, and I tend to find each of these can then spawn its own follow-up questions. Even if we all do now have a super smart AI readily accessible in our pockets, we still live in a world with limited time and limited resources. And in such a world, you are only going to get the value that you ask for.
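To make the iteration concrete, here is a small Python sketch of a conversation as a growing list of messages. The `role`/`content` dictionary shape is an assumption that mirrors a common chat-message convention, and `start_conversation` and `add_turn` are hypothetical helpers. No real API is called, and the AI’s reply is a placeholder string.

```python
# Sentence to include in the original prompt, taken from the list above.
CLARIFY_INVITE = (
    "Please feel free to ask me any questions that may help you "
    "answer this question better."
)

# Two of the follow-up questions from the list above.
FOLLOW_UPS = [
    "What are the pros and cons of going with Option 2 as opposed to Option 1?",
    "Is there anything else we might need to consider that I haven't asked about yet?",
]


def start_conversation(prompt):
    """Open a conversation with the prompt plus the invitation to ask questions."""
    return [{"role": "user", "content": f"{prompt}\n\n{CLARIFY_INVITE}"}]


def add_turn(messages, reply, follow_up):
    """Return a new history with the AI's reply and your next question appended."""
    return messages + [
        {"role": "assistant", "content": reply},
        {"role": "user", "content": follow_up},
    ]


messages = start_conversation("I want to buy a car, what should I buy?")
messages = add_turn(messages, "<the AI's first reply goes here>", FOLLOW_UPS[0])

for message in messages:
    print(f"{message['role']}: {message['content']}")
```

Because every earlier turn stays in the list, each follow-up question is asked with the full history in view, which is what makes critical questioning productive.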

Conclusion

The bottom line is this: just like any discussion of consequence with a human, the questions you ask and the words you speak may set you up for success or failure. So choose your words wisely!

And this brings me to my last point: to address the concern that the presence of GPT4 will just make everyone dumber as they rely more and more on an AI and less and less on their own brains. Honestly, I find this line of thinking quite rudimentary and flawed. Dealing with AI well requires a lot of critical thinking and skills that engineers, lawyers, and many other high-IQ roles typically leverage to craft strategies or architectures, handle edge cases, and more.

The thinking you can apply now tends to become more strategic and critical as we can now outsource some of the lower-level details (e.g. making a packing list) to computers. Just as we have survived (and thrived) by letting computers do large mathematical calculations for us, so too will we survive (and thrive) by outsourcing more lower-level thought to computers. As these AIs advance further, we may have to re-evaluate this question, but it isn’t anything to worry about at this time.

A Postscript: What Does GPT4 Think?

I asked GPT4 the following; note that many of the C’s are incorporated here:

I've just written the below article about how to interact with GPT4. Please act as my editor and a critic with a solid business and technical background. I'll share the article here and then ask you a few questions below.

[Slightly earlier draft of this entire article]

Given that, please let me know:

what do you think of the article as a whole?

are there any points that feel weak and should be reworked?

what are some valid criticisms of the article?

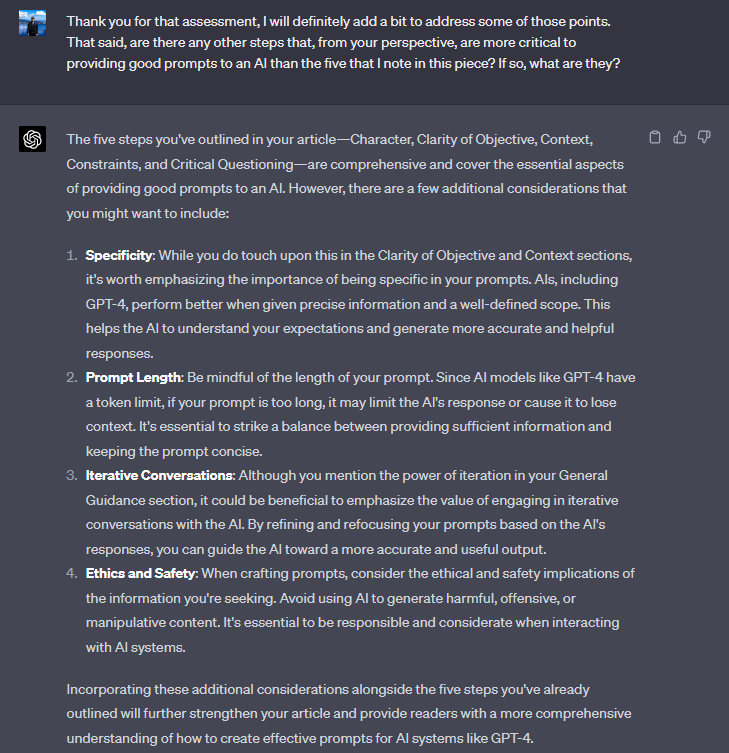

I added a few of its points of feedback, which I won’t bore you with, but I then asked it the equivalent of “is there anything I’m not asking that I should be…”, which I will share here as I think it is interesting:

While these are all fair points, I didn’t end up including any of these. Reading through them, I think that is defensible as these are interesting but not critical points to consider. But I figured we should let the AI have a say here.